Since the dawn of the digital age, decision-making in finance, employment, politics, health and human services has undergone revolutionary change. Today, automated systems—rather than humans—control which neighborhoods get policed, which families attain needed resources, and who is investigated for fraud. While we all live under this new regime of data, the most invasive and punitive systems are aimed at the poor.

The U.S. has always used its most cutting-edge science and technology to contain, investigate, discipline and punish the destitute. Like the county poorhouse and scientific charity before them, digital tracking and automated decision-making hide poverty from the middle-class public and give the nation the ethical distance it needs to make inhumane choices: which families get food and which starve, who has housing and who remains homeless, and which families are broken up by the state. In the process, they weaken democracy and betray our most cherished national values.

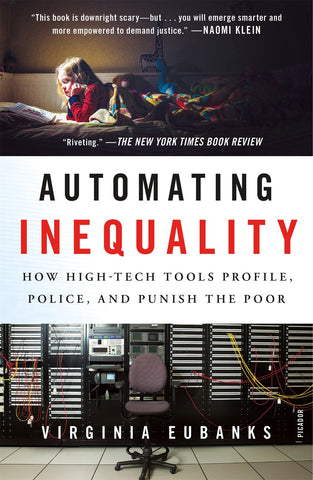

This deeply researched and passionate book could not be more timely.

A powerful investigative look at data-based discrimination—and how technology affects civil and human rights and economic equity. The State of Indiana denies one million applications for healthcare, foodstamps and cash benefits in three years—because a new computer system interprets any mistake as “failure to cooperate.” In Los Angeles, an algorithm calculates the comparative vulnerability of tens of thousands of homeless people in order to prioritize them for an inadequate pool of housing resources. In Pittsburgh, a child welfare agency uses a statistical model to try to predict which children might be future victims of abuse or neglect.